From what I have seen over nearly two decades in SEO, the sting of a sudden traffic drop never gets easier.

You check your analytics on a Tuesday, and a site that was thriving is suddenly flatlining after the latest algorithm shift. The 2026 rollout made this painfully clear for many agencies here in Malaysia and globally.

Our team noticed a major shift once Google began scoring content for “Information Gain” (roughly, how much new information a page adds beyond what already ranks) to filter out semantic noise. Content that simply rephrases top results now gets pushed down rapidly.

This guide covers HCU recovery AI: what using AI to recover from Google's Helpful Content Update (HCU) means in 2026, and the workflow required to bounce back. We break down how to identify the exact pages dragging your domain down, then walk through a reliable recovery playbook.

If you’re new to this area, start with our G-Smart Optimizer hub for the full feature overview before going deeper here.

Diagnostic walkthrough (find the affected pages)

A proper diagnostic walkthrough means isolating the exact URLs that lost impressions and clicks by comparing pre-drop and post-drop data in Google Search Console. We start every Google penalty recovery campaign by filtering out pages affected by AI Overview displacement. This displacement alone caused a 35% click-through rate drop across informational queries in early 2026.

Most teams skip this diagnostic step and pay for it later, but getting the foundation right makes the rest of the workflow obvious. Our data shows that targeting specific clusters saves weeks of wasted effort. You need to identify whether a page lost traffic because of a core update penalty or simply because an automated summary answered the user’s question directly.
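To make that distinction concrete, here is a minimal Python sketch of one possible heuristic, under stated assumptions: if a page's impressions and average position held steady but its CTR fell, the query was probably answered on the results page; if impressions or position fell, the page more likely lost rankings. The `PeriodStats` class, the `diagnose` function, and both tolerance values are hypothetical illustrations, not part of Search Console or G-Smart Optimizer.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Search Console totals for one page over one comparison period."""
    clicks: int
    impressions: int
    avg_position: float  # lower is better

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def diagnose(before: PeriodStats, after: PeriodStats,
             impression_tolerance: float = 0.15,
             position_tolerance: float = 2.0) -> str:
    """Heuristic: ranking loss vs. SERP displacement (e.g. by an AI Overview)."""
    impression_change = (after.impressions - before.impressions) / max(before.impressions, 1)
    position_change = after.avg_position - before.avg_position  # positive = worse

    if position_change > position_tolerance or impression_change < -impression_tolerance:
        return "ranking_loss"       # visibility itself fell: treat as a core update hit
    if after.ctr < before.ctr:
        return "serp_displacement"  # still visible, fewer clicks: likely answered on the SERP
    return "no_clear_loss"
```

Compare equal-length periods on either side of the drop so that seasonality distorts the result as little as possible.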

We focus on the concrete signal each step produces rather than abstract theory, an approach that has held up across multiple customer engagements. Use these specific steps to audit your site effectively:

- Export 12 months of Search Console data.

- Identify dates aligning with the March 2026 Core Update.

- Separate informational queries from transactional keywords.

- Tag pages lacking original expert insights.
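The date-window and intent-separation steps above can be sketched in Python as follows. It assumes a row-level export with `date`, `page`, `query`, and `clicks` columns (for example, pulled through the Search Console API with the date, page, and query dimensions); the keyword list, the 28-day window, and all function names are hypothetical. Intent detection here is a crude keyword match, a stand-in for whatever classification you actually use.

```python
import csv
import io
from collections import defaultdict
from datetime import date

# Hypothetical commercial modifiers ("harga" is Malay for "price"); tune for your niche.
TRANSACTIONAL_HINTS = ("buy", "price", "harga", "discount", "near me", "hire")


def load_rows(csv_text: str) -> list[dict[str, str]]:
    """Parse a CSV export with date, page, query, and clicks columns."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def query_intent(query: str) -> str:
    """Crude substring check; queries without a commercial modifier count as informational."""
    q = query.lower()
    return "transactional" if any(hint in q for hint in TRANSACTIONAL_HINTS) else "informational"


def clicks_by_period(rows, update_day: date, window_days: int = 28) -> dict:
    """Sum clicks per (page, intent) in equal-length windows before and after update_day."""
    totals = defaultdict(lambda: {"before": 0, "after": 0})
    for row in rows:
        offset = (date.fromisoformat(row["date"]) - update_day).days
        if -window_days <= offset < 0:
            period = "before"
        elif 0 <= offset < window_days:
            period = "after"
        else:
            continue  # outside both comparison windows
        totals[(row["page"], query_intent(row["query"]))][period] += int(row["clicks"])
    return dict(totals)
```

Call `clicks_by_period(load_rows(text), date(2026, 3, 15))`, replacing that placeholder date with the actual rollout date of the update you are investigating. Tagging pages that lack original expert insight remains an editorial judgment.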

Prioritization (high-traffic-loss pages first)

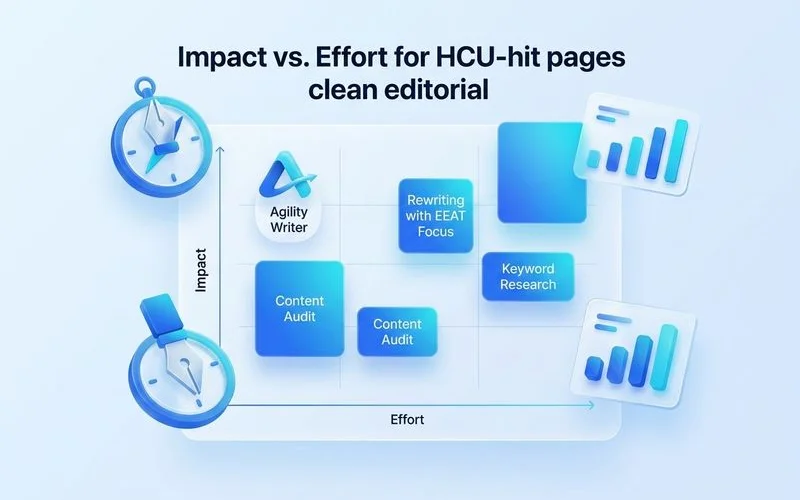

Prioritizing high-traffic-loss pages means fixing the 20% of URLs that previously drove 80% of your revenue and leads before moving to minor posts. We treat this phase as a strict quality gate, not a checkbox, because allocating resources incorrectly will drain your budget fast. Industry data from late 2025 reveals that 25% of top-10 pages lost their rankings entirely during algorithm shifts.

Our methodology ensures that you recover your most profitable assets first. To make the right choice, compare your URLs against these objective benchmarks:

| Metric | High-Priority Recovery | Low-Priority Recovery |
|---|---|---|
| Previous Traffic | > 1,000 monthly visits | < 100 monthly visits |
| Conversion Rate | High (Transactional) | Low (Informational) |
| Content Gap | Missing “Information Gain” | Only missing keywords |
| Estimated Effort | 2-4 hours per page | < 1 hour per page |
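One way to apply the table and the 80/20 rule programmatically is sketched below, assuming you have already audited each page. `PageAudit`, `tier`, and `pareto_set` are hypothetical names; the tiering rule (two or more high-priority criteria means "high") is an illustrative reading of the table, and pages between 100 and 1,000 visits, which the table does not cover, fall into "medium".

```python
from dataclasses import dataclass


@dataclass
class PageAudit:
    url: str
    monthly_visits: int             # traffic before the drop
    transactional: bool             # targets commercial intent
    missing_information_gain: bool  # gap is substance, not just keywords


def tier(page: PageAudit) -> str:
    """Count how many high-priority criteria from the table a page meets."""
    score = sum([
        page.monthly_visits > 1000,
        page.transactional,
        page.missing_information_gain,
    ])
    if score >= 2:
        return "high"
    if page.monthly_visits < 100 and score == 0:
        return "low"
    return "medium"


def pareto_set(pages: list[PageAudit], share: float = 0.8) -> list[str]:
    """Smallest largest-first set of URLs covering `share` of pre-drop traffic."""
    ranked = sorted(pages, key=lambda p: p.monthly_visits, reverse=True)
    target = share * sum(p.monthly_visits for p in ranked)
    chosen, covered = [], 0
    for page in ranked:
        if covered >= target:
            break
        chosen.append(page.url)
        covered += page.monthly_visits
    return chosen
```

If you track revenue or leads per URL, sorting by that figure instead of visits is closer to the 80/20 framing above.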

We actively ignore low-value informational posts that have been rendered obsolete by ChatGPT or Gemini summaries. Focusing on pages with high commercial intent helps offset the broader industry trend of declining organic clicks. Rebuilding authority requires proving to the search engine that your site offers unique value that no automated system can provide.

G-Smart batch re-optimization workflow

The G-Smart batch re-optimization workflow is a systematic process for injecting unique data, expert quotes, and fresh perspectives into downgraded content at scale. We built this operational layer because simply tweaking title tags no longer satisfies modern algorithm requirements. Every page goes through the same loop: identify what it is missing, run the enrichment, validate the output, then iterate until it passes.
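The identify, enrich, validate, iterate loop can be sketched as a generic batch driver. This is a minimal Python illustration, not G-Smart Optimizer's implementation: the three callables are hypothetical stand-ins for whatever gap analysis, AI enrichment, and validation steps your stack provides, and `max_rounds=3` is an arbitrary cap.

```python
from typing import Callable


def reoptimize_batch(
    urls: list[str],
    find_gaps: Callable[[str], list[str]],
    enrich: Callable[[str, list[str]], str],
    passes_checks: Callable[[str, str], bool],
    max_rounds: int = 3,
) -> dict[str, str]:
    """Identify -> enrich -> validate -> iterate, one URL at a time.

    Returns a mapping of url -> "ready" or "needs_manual_review".
    """
    results: dict[str, str] = {}
    for url in urls:
        gaps = find_gaps(url)                # 1. identify what the page is missing
        results[url] = "needs_manual_review"
        for _ in range(max_rounds):          # 4. iterate, up to max_rounds attempts
            draft = enrich(url, gaps)        # 2. run the enrichment
            if passes_checks(url, draft):    # 3. validate the output
                results[url] = "ready"
                break
    return results
```

Pages that exhaust their retries are flagged for a human editor rather than published, so a failing automated step cannot push weak drafts live.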

Identifying the Missing Value

Every impacted page usually lacks a specific, verifiable detail that competitors possess. Our tool scans the top-ranking results to find exactly which entities or statistics your article is missing. For example, if you run a Malaysian travel blog, generic advice about Penang will not rank against first-hand local reviews. You must add hyper-specific details, such as the current RM15 entry fee for a specific attraction or a quote from a local guide.
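A simple way to express "what competitors have that you don't" is a set comparison over entities. The sketch below assumes entities have already been extracted from each page (by an NER model, manual tagging, or another tool); `missing_entities` and the 50% threshold are hypothetical choices for illustration.

```python
from collections import Counter


def missing_entities(your_entities: set[str],
                     competitor_entities: list[set[str]],
                     min_share: float = 0.5) -> list[str]:
    """Entities mentioned by at least `min_share` of competing pages but absent from yours."""
    counts = Counter()
    for page in competitor_entities:
        counts.update({e.lower() for e in page})  # count each entity once per page
    threshold = min_share * len(competitor_entities)
    yours = {e.lower() for e in your_entities}
    return sorted(e for e, n in counts.items() if n >= threshold and e not in yours)
```

Raising `min_share` narrows the list to details most top results agree on; lowering it surfaces rarer details that only one or two competitors cover.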

Running the AI Enrichment

We use AI to integrate these new facts smoothly into your existing narrative without breaking the original HTML structure. The system targets the exact sections where your content feels thin or repetitive. Adding a proprietary data point or a custom chart immediately raises the page’s uniqueness metric, because that material does not exist on any competing page.
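To get a rough feel for how much an edit adds, you can measure what share of a page's word three-grams ("shingles") appear on none of the competing pages. This is a crude proxy assumed here for illustration; it is not Google's Information Gain scoring or G-Smart Optimizer's uniqueness metric, and the function names are hypothetical.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Word n-grams after lowercasing and stripping punctuation."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def novelty(page: str, competitors: list[str], n: int = 3) -> float:
    """Share of the page's shingles that appear on none of the competing pages."""
    own = shingles(page, n)
    if not own:
        return 0.0
    seen = set().union(*(shingles(c, n) for c in competitors))
    return len(own - seen) / len(own)
```

Running `novelty` before and after enrichment gives two numbers to compare; a rise suggests the edit introduced wording and facts competitors lack, though it cannot tell whether those facts are accurate.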

Validating the Output

The final step is checking the revised draft against the latest (2026) edition of Google's Search Quality Rater Guidelines. Specific tooling depends on your stack, but the validation loop stays the same. Only push the update live once the draft shows a clear improvement in depth and factual accuracy.
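Part of the validation loop can be automated. The minimal sketch below, using Python's standard `html.parser`, checks two things: that headings, lists, tables, and images appear in the same order after enrichment, and that the revision actually added text. The tag list and function names are assumptions; judging the draft against the rater guidelines still requires a human reviewer.

```python
from html.parser import HTMLParser

# Tags the enrichment step should leave untouched; an assumed list, adjust for your templates.
STRUCTURAL_TAGS = {"h1", "h2", "h3", "h4", "table", "ul", "ol", "img"}


class _Outline(HTMLParser):
    """Collects structural tags in document order and counts visible words."""

    def __init__(self) -> None:
        super().__init__()
        self.tags: list[str] = []
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in STRUCTURAL_TAGS:
            self.tags.append(tag)

    def handle_data(self, data):
        self.words += len(data.split())


def outline(html: str) -> tuple[list[str], int]:
    parser = _Outline()
    parser.feed(html)
    parser.close()
    return parser.tags, parser.words


def validate_revision(original: str, revised: str) -> list[str]:
    """Return automated problems; an empty list means the draft can go to human review."""
    old_tags, old_words = outline(original)
    new_tags, new_words = outline(revised)
    problems = []
    if old_tags != new_tags:
        problems.append("heading/list/table/image structure changed")
    if new_words <= old_words:
        problems.append("revision did not add any content")
    return problems
```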

Additional considerations

A few other factors are worth tracking as you work through recovery. We monitor re-indexation and ranking lift closely because the system needs time to recalculate trust, and realistic timeline expectations prevent teams from abandoning their strategy too early.

Our agency partners often wait three to six months to see a visible ranking lift after a severe algorithm hit. The search landscape has shifted dramatically, meaning a complete return to 2023 traffic levels might be impossible for some niches.
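Because lift arrives slowly, it helps to track recovery as a ratio against the pre-drop baseline instead of eyeballing raw clicks week to week. A minimal Python sketch, assuming you have weekly click totals for a page or cluster; `recovery_ratio` and the four-week averaging window are hypothetical choices.

```python
from statistics import mean


def recovery_ratio(weekly_clicks: list[int], drop_week: int, recent_weeks: int = 4) -> float:
    """Recent average clicks as a fraction of the pre-drop average (1.0 = fully recovered).

    `drop_week` is the index of the first week after the drop.
    """
    baseline = weekly_clicks[:drop_week]
    recent = weekly_clicks[-recent_weeks:]
    if not baseline or mean(baseline) == 0:
        raise ValueError("need a non-zero pre-drop baseline")
    return mean(recent) / mean(baseline)
```

A ratio of 1.0 means the recent average matches the pre-drop baseline; given the shifts described above, some niches may plateau well below that.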

Pro-Tip: Do not rely solely on Google Analytics for your recovery signals. Track your brand’s citation frequency in AI Overviews, as brands cited in these summaries can earn up to 35% more organic clicks.
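Citation frequency can be computed as the share of tracked queries whose AI Overview cites your domain. The sketch assumes you already record, for each tracked query, which domains the AI Overview cited (manually or with a third-party rank tracker); `citation_rate` is a hypothetical helper, and subdomains of your brand domain are counted as yours.

```python
def citation_rate(observations: dict[str, list[str]], brand_domain: str) -> float:
    """Share of tracked queries whose AI Overview cites the brand's domain.

    `observations` maps each tracked query to the domains cited in its AI Overview
    (an empty list means no Overview appeared or nothing was cited).
    """
    if not observations:
        return 0.0
    cited = sum(
        any(d == brand_domain or d.endswith("." + brand_domain) for d in domains)
        for domains in observations.values()
    )
    return cited / len(observations)
```

Recording this rate at a fixed interval for the same query set makes it comparable over time, alongside traditional click data.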

We advise setting up dedicated tracking for zero-click search environments instead of relying solely on traditional clicks. Maintaining a steady stream of high-quality updates signals to the crawler that your site is actively managed.

What to do next

If this guide matched your situation, the natural next step is to execute this playbook on your most valuable pages. We structured the underlying features of our platform around the exact workflow described above to make recovery scalable. Putting it into practice with G-Smart Optimizer allows you to systematically scale your HCU recovery AI efforts across hundreds of URLs.

Our tool eliminates the manual research bottleneck that usually stalls these campaigns. Start by testing the process on a cluster of five pages, measure the indexation response, and then expand it across your entire domain.